After nearly a decade as a PlayStation exclusive, developer Naughty Dog’s The Last of Us has finally arrived on PC, embracing both the best and the worst aspects of the platform. The game launched on PC two weeks ago, and I got a review code on launch day. So why have I waited this long to write this review? Well, if you’ve been following the developments closely, you’re no stranger to the myriad faults this release brings with it. The game launched in a broken state, with bugs and tons of performance issues that made it unplayable on anything but the highest-end systems. As of the time of writing, Naughty Dog has deployed multiple hotfixes and one giant 15GB patch that has significantly improved the game’s stability. Even so, this PC port still has a long way to go if it wants to live up to PlayStation’s generally excellent PC ports (see: Spider-Man, Returnal, Days Gone).

The Good, the Bad and the Ugly — TLoU PC vs PS5 Graphics Explained

Great Scott! This shader compilation is a nice CPU stress test. I'm guessing most of the current Steam reviews are bad because people started playing it before the compilation?

The graphics menu, though, has TONS of options; looking good there. #TheLastOfUsPC pic.twitter.com/M75uUNZn0P

— Rahul Majumdar (@darthrahul) March 29, 2023

Right from the first moment you boot the game, you’re greeted by the ol’ (un)reliable shader compilation progress bar at the bottom of the screen. You can start playing before it finishes, if a stutter fest is what you’re looking for. Shader compilation hammers your CPU while all you can do is hope your cooling solution can handle the heat, and if you’re sporting an older, weaker processor, pray to the PCMR gods. In my case, my humble Ryzen 7 3700X, which celebrates its fourth anniversary this year and is broadly equivalent to the CPU in the PS5, spent almost 40 minutes cooking shaders. Things don’t look good here.

On the flip side, the graphics menu offers a ton of options, more than the average PC port. Almost every effect is scalable, from animation quality to character rendering to environmental effects and reflections. I suspect that once Naughty Dog and co-developer Iron Galaxy have fixed the game’s core streaming issues, it should make for a great experience for newcomers to the franchise. Right now, though, while the graphics menu looks great, it doesn’t accurately reflect how much load each setting places on the PC’s components.

The Last of Us PC Performance Benchmarks

I played the game on my PC, rigged with an Nvidia GeForce RTX 2060 Super and an AMD Ryzen 7 3700X paired with 32GB of RAM. That last bit is important, as this appears to be the first proper new-gen game to push past the 16GB of RAM that most, if not all, PC games have settled on as a recommendation over the last decade. The PS5 has 16GB of shared memory, of which roughly 12GB is available to games. On top of that, the console includes a dedicated hardware decompressor that helps load assets into memory without bottlenecking the APU. On PC, asset decompression is handled by the CPU, which eats into the processing time it has for everything else.

This could’ve been mitigated to a degree had the developers implemented DirectStorage, which is currently supported only in Forspoken. Regardless, the way the game loads assets in the background is, as of the time of writing, well, kinda broken. Even after the lengthy shader compilation at the start, the game constantly loads new assets in the background as you play, hammering the CPU while hoarding more system resources than it needs.

The Last of Us Part 1 has a really weird memory management system, if you have an 8GB GPU, the game will seemingly reserve 1.6GB for the OS, even if Windows isn't using anywhere near that amount.

If you have a 24GB GPU, it reserves nearly 5GB of VRAM for the OS.

It's like they…

— Charlie (@ghost_motley) April 2, 2023

Honestly, benchmarking the game at this point is fairly pointless. While I could say that I’m getting around 70–90 fps at high settings with DLSS turned on, the actual play experience is something else entirely. Slapping DLSS on a broken port isn’t a real fix, and it’s something more developers need to take into account.

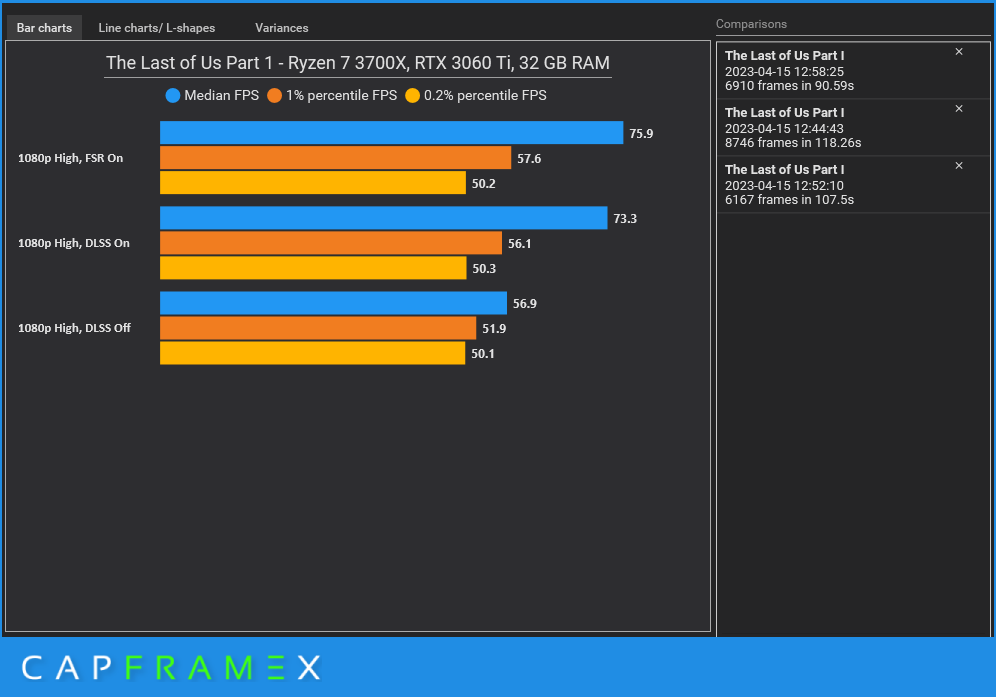

Update: Now that the game is in a more stable state, I’ve put together some benchmarks at various settings. The overall experience still isn’t as stable as I’d hoped, but at least you can now actually play and enjoy the game, provided you have decent hardware.

If you have an Nvidia GPU, keep DLSS turned on, not just for better performance but also for better image stability at appropriate resolutions. The game’s default anti-aliasing solution isn’t perfect, and the only way to replace it is with either DLSS or FSR. Speaking of which, AMD’s FSR 2 works well here, producing better image quality than I expected.

On the latest patch, v1.03, the game is quite stable, with around 18GB of RAM usage in intense scenes. Yes, that’s well past the standard 16GB recommendation, and that too at just 1080p! It would be reasonable to expect a game whose core mechanics originate in 7th-gen console design to be playable on lower-end hardware today, but the nature of the remake, and how it streams data on the PS5, muddies the waters. My Ryzen 7 3700X, roughly equivalent to the processor in the PS5, is the main bottleneck here when trying to achieve high, nay, even standard frame rates. Because the game is constantly streaming data, it is extremely taxing on the CPU at virtually all times, with 70–80% usage across most cores simultaneously. This is not a game where you can max everything out at “Ultra” and soar high in the clouds with a buttery-smooth frame rate.


The Perks of Being a PC Port

Of course, while being a PC port has its downsides, and they crop up more often these days, it also opens up a world of possibilities that were previously unimaginable on a console. Yep, you know where I’m going with this: mods.

One particularly interesting example is a work-in-progress mod by VoyagersRevenge that adds a first-person mode to the game. Check this out, doesn’t it look so fucking cool?

It’s cool stuff like this that keeps people engaged with the platform, more so than the usual allure of better performance and more options. Speaking of which, on paper the PC version offers far more visual scalability than the PS5 version. You get longer draw distances, better reflections and refractions, and higher-quality character and environment detail, shadows and light bounces. The one sore spot is texture quality. The material textures in this PC port fall far behind those on the PS5. Max out the settings all you want and throw as much VRAM as you can at it; the game simply doesn’t look as good as its console counterpart. It’s an issue I hope gets fixed soon, and judging by how frequently Naughty Dog has been pushing updates, I expect it will be.

Keyboard-and-mouse controls fuck with my brain in weird ways. Joel and Ellie don’t seem to be programmed for input that abrupt, with the high-fidelity animations papering over the dead zones in movement that digital directional input brings to the table. Of course, it makes shooting zombies easier and quick-time events funnier. Playing with an Xbox controller? Holy shit, we’re living in a parallel universe. I was going to complain about mouse acceleration and on-screen camera jitter, but both have since been patched, and the game now looks and feels significantly better to control.

Verdict

The Last of Us Part 1’s PC port has its fair share of issues, but it’s a diamond in the rough that should soon become a great version of a beloved classic once Naughty Dog has patched it to perfection.